Making Cybersecurity Policy Work In Practice

April 7, 2026

I did not come to cybersecurity through policy (probably no one does). I will admit, though, that policies, standards and frameworks caught my interest along the way. My first impression of the field was “oh, cybersecurity is all about hacking”, or perhaps about analysing systems, logs and configurations, or something like the CTFs I got to try, where we simply had to find a flag. I now know there is a lot more to it, including an area with a great deal of potential: Governance, Risk and Compliance. I find it very exciting!

In the early stages of my training, security seemed concrete: a system was either vulnerable or it was not. Evidence was something that could be traced, captured, and reasoned about, and there were plenty of tools for the job.

Over time, however, I began to notice a different kind of problem, one that could not be solved by better tooling alone. It changed the initial narrative of “simple problems”, because we had moved from sandbox environments to real, practical ones.

I believe the problem is not a lack of security controls but a lack of clarity about what needs to be done.

As I progressed through my studies and internships, I was exposed to environments where, for the most part, it was a close call between being compliant and being technically accurate. In practice, the best companies are those that strike a balance based on their objectives.

Some organisations struggle to answer the question: are we doing what the policy actually expects us to do? Naturally, this question appears repeatedly, in different forms.

Sometimes it surfaces during audits, at other times during discussions on how to manage the incident response plan, and at others when teams try to reconcile what they believe is compliant with what regulators might later decide.

I will admit, this gap between written policy and lived practice is what drew me toward governance-focused cybersecurity work.

From my research, European cybersecurity policy, especially in its current evolution, is ambitious and very relevant. Instruments such as the NIS2 Directive, the Cyber Resilience Act, and the Cybersecurity Act aim to raise baseline security across sectors that are increasingly interconnected and increasingly exposed. Nevertheless, ambition alone does not guarantee effectiveness. Policies are written in legal language for good reasons, but those same reasons often make them difficult to operationalise, which is the nightmare most organisations face. When requirements are abstract, organisations interpret them differently. When interpretation varies, consistency disappears.

From what I have seen, many organisations do not fail at cybersecurity because they are careless; far from it. They fail because they are uncertain. They are unsure how to translate policy language into system configurations, monitoring practices, reporting thresholds, or organisational procedures. This uncertainty is amplified for smaller organisations, which may lack dedicated governance teams or legal expertise, as these are additional costs they prefer not to bear until something happens. In such cases, compliance becomes reactive rather than continuous, and security becomes something that is demonstrated occasionally rather than practised daily, or at least far more frequently.

This is where the idea of Policy-as-Code began to resonate with me. Although I have no practical experience in implementing it, I have been learning more about it and find it quite zeitgeisty.

To put it plainly, I believe Policy-as-Code is not a radical reinvention of regulation; it is a translation exercise. At its core, it asks a practical question: What if policy requirements could be expressed in a way that systems can evaluate, without stripping away human oversight? In technical domains, configuration, infrastructure, and security rules are already treated as artefacts that can be versioned, tested, and reviewed. Policies, by contrast, often remain static documents that are read infrequently and interpreted inconsistently.

My interest lies in exploring how selected cybersecurity policy requirements could be expressed as structured, testable rules that support continuous assurance. This does not mean reducing legal obligations to simplistic checks. Rather, it means identifying areas where policy intent can be supported by technical validation. For example, requirements around access control, logging, incident readiness, or system hardening can often be linked to observable conditions. When those conditions are unmet, organisations receive early signals instead of post-incident surprises.
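
To make the idea of a structured, testable rule a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the rule identifiers, the configuration field names, and the 90-day threshold are hypothetical and are not taken from NIS2 or any other instrument. Each rule carries a plain-language statement of intent next to its check, so a human reviewer can see what is being evaluated and why.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class PolicyRule:
    """One testable rule: an identifier, its intent in plain language, and a check."""
    rule_id: str                            # hypothetical identifier, not a legal reference
    intent: str                             # what the rule is meant to achieve
    check: Callable[[dict[str, Any]], bool]  # returns True when the condition is met


# Hypothetical rules; field names and thresholds are illustrative assumptions.
RULES = [
    PolicyRule(
        "AC-01",
        "Administrative access requires multi-factor authentication.",
        lambda cfg: cfg.get("admin_mfa_enabled") is True,
    ),
    PolicyRule(
        "LOG-01",
        "Security logs are retained for at least 90 days.",
        lambda cfg: cfg.get("log_retention_days", 0) >= 90,
    ),
]


def evaluate(config: dict[str, Any], rules: list[PolicyRule] = RULES) -> list[dict[str, Any]]:
    """Evaluate every rule against a configuration snapshot and keep the intent with the result."""
    return [
        {"rule_id": rule.rule_id, "intent": rule.intent, "passed": rule.check(config)}
        for rule in rules
    ]


# A hypothetical snapshot of one system's settings.
snapshot = {"admin_mfa_enabled": True, "log_retention_days": 30}

for finding in evaluate(snapshot):
    status = "PASS" if finding["passed"] else "REVIEW"
    print(f'{status:6} {finding["rule_id"]}: {finding["intent"]}')
```

A failed check is labelled "REVIEW" and not "non-compliant" on purpose: in this sketch the code only raises the early signal, and the judgment about what it means stays with a person.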

During my work on AI security and system assurance, I saw how valuable continuous validation can be. Systems change frequently, configurations drift, and teams rotate. Consequently, when assurance relies on static documentation, it can quickly become outdated. Meanwhile, automated checks, when carefully designed, offer a way to maintain alignment between intent and implementation. They also create traceability, something that both regulators and organisations value.
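
As a rough illustration of the drift problem, the following Python sketch compares an approved baseline with the configuration currently observed and produces a timestamped record for each difference. The setting names and values are hypothetical, and a real environment would need to collect the observed configuration from its own tooling; this only shows the comparison and the traceability record.

```python
from datetime import datetime, timezone
from typing import Any

MISSING = "<absent>"  # marker for a setting present on one side only


def detect_drift(baseline: dict[str, Any], current: dict[str, Any]) -> list[dict[str, Any]]:
    """Return one timestamped record per setting that differs from the approved baseline."""
    checked_at = datetime.now(timezone.utc).isoformat()
    records = []
    # Look at every setting that appears in either the baseline or the current state.
    for setting in sorted(baseline.keys() | current.keys()):
        expected = baseline.get(setting, MISSING)
        observed = current.get(setting, MISSING)
        if expected != observed:
            records.append(
                {
                    "setting": setting,
                    "expected": expected,
                    "observed": observed,
                    "checked_at": checked_at,
                }
            )
    return records


# Hypothetical baseline and observed state.
approved = {"tls_min_version": "1.2", "audit_logging": True}
observed = {"tls_min_version": "1.0", "audit_logging": True, "debug_mode": True}

for record in detect_drift(approved, observed):
    print(record)
```

Keeping the expected value, the observed value, and the time of the check together is what turns a one-off comparison into evidence that can be shown to an auditor later.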

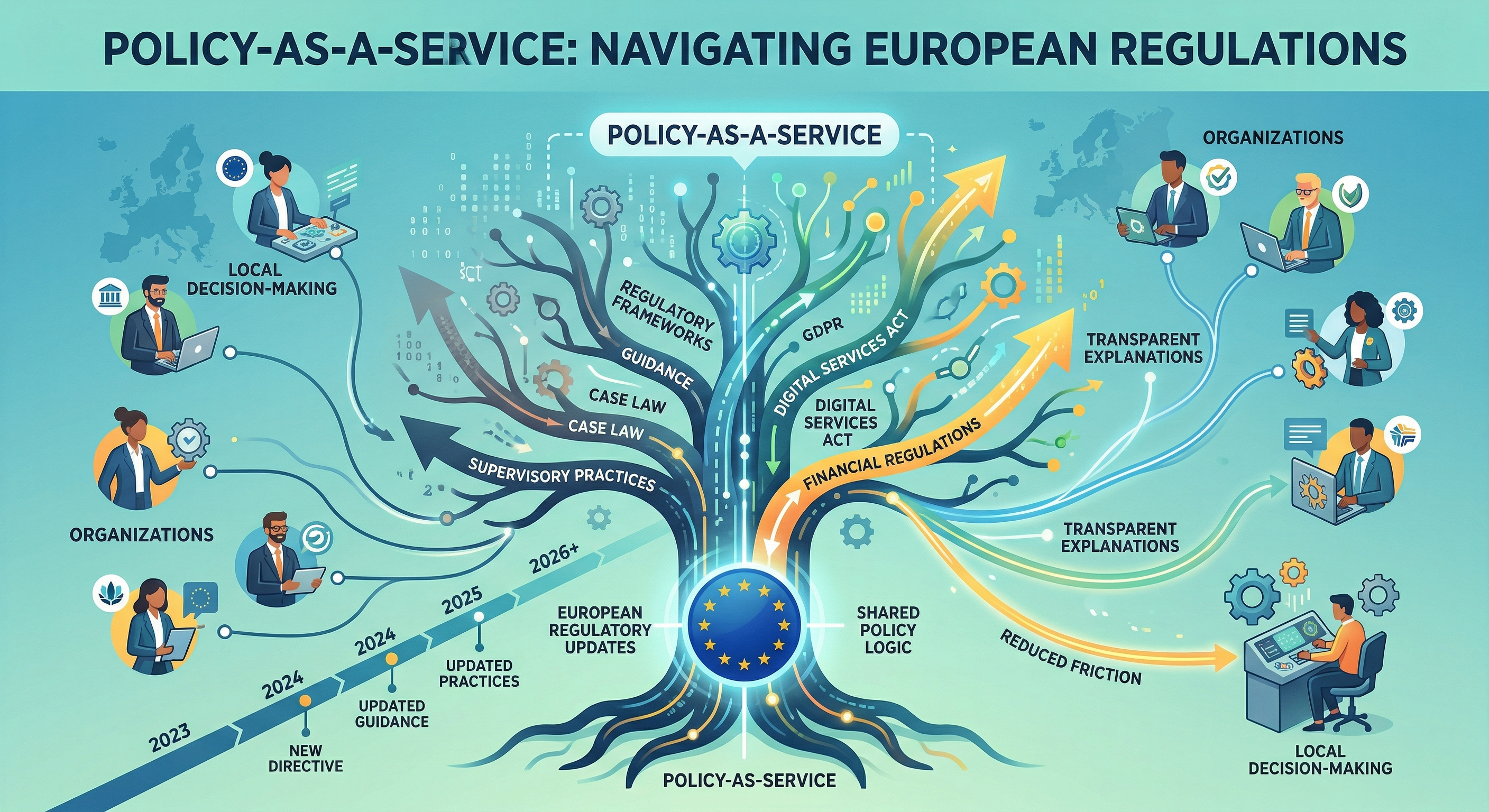

Building on this idea, “Policy-as-a-Service” introduces an additional layer that is particularly relevant in the European context. Simply put, regulatory frameworks evolve, and guidance is constantly being updated. In addition, interpretations shift as case law and supervisory practices mature. Expecting every organisation to track and interpret these changes independently is unrealistic. A shared service that maintains policy logic, updates it responsibly, and provides transparent explanations could significantly reduce friction without centralising decision-making authority.

Naturally, I do not mean that such a service would replace internal responsibility. Organisations would remain full owners of their risk decisions. Even so, they would benefit from consistent policy representations, clearer reporting structures, and guidance that reflects current regulatory expectations. For supervisory bodies, this approach could also improve comparability and reduce the burden of interpreting heterogeneous compliance narratives.

What matters deeply to me in this discussion is the role of human judgment. In my view, automation in governance must not become a black box, and therefore Policy-as-Code should support decision-making, not obscure it. Systems must be explainable to technical teams, auditors, and non-technical stakeholders alike. Trust in governance systems depends on understanding how conclusions are reached and where their limits lie; it is important, in fact necessary, to make that clear.

This concern is shaped by my broader exposure to security research, including work in areas such as system behaviour analysis and trustworthiness. Whether examining model robustness, system integrity, or compliance readiness, the same principle applies: tools are only as effective as the confidence people place in them. The evidence suggests that confidence is built through transparency, not through complexity for its own sake.

I am drawn to this area of work because it sits at a meaningful intersection. It is technical enough to require rigour and precision, yet human enough to demand empathy for how organisations actually operate. It requires understanding systems, but also understanding people, incentives, and institutional constraints, knowing just how different every organisation is. That combination is what makes cybersecurity governance challenging, and what, in my opinion, also makes it worth pursuing.

Ultimately, my motivation is not to add another framework or layer of abstraction. It is to help reduce uncertainty. To help organisations understand what is expected of them, how they can demonstrate it, and how they can improve before failures occur. Policy, when implemented thoughtfully, can be an enabler instead of an obstacle. Bridging the gap between policy text and operational reality is, I am convinced, one way to make that possible.

This is the kind of work I want to do. Work that is careful, grounded, and genuinely useful. Work that respects both the intent of regulation and the realities of technical systems. And work that contributes, in a small but palpable way, to a more resilient and, of course, more secure digital environment.